Due to the recent supply-chain attack on Solarwinds products, I wanted to put down a few thoughts on the role of open source software and security. It is kind of a rambling post and I’ll probably lose all three of my readers by the end, but I found it interesting to think about how we got here in the first place.

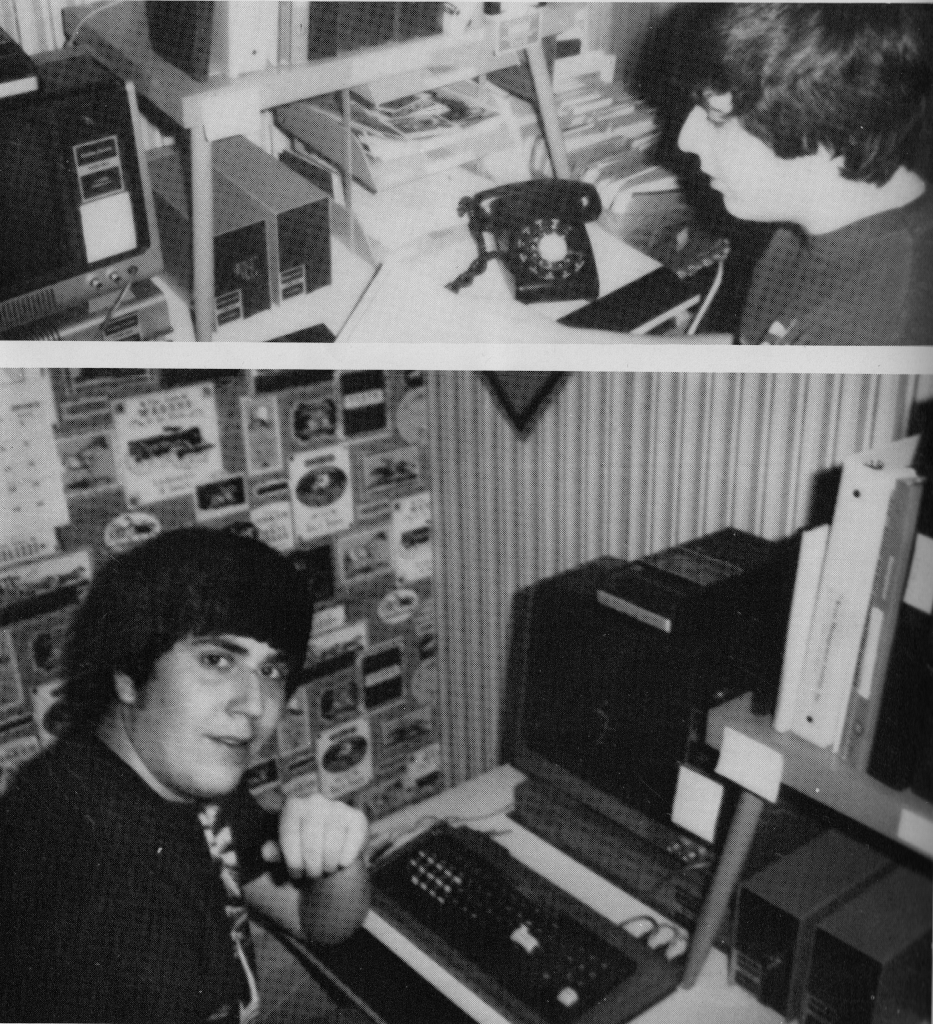

I got my first computer, a TRS-80, as a Christmas present in 1978 from my parents.

As far as I know, these are the only known pictures of it, lifted from my high school yearbook.

Now, I know what you are thinking: Dude, looking that good how did you find the time off your social calendar to play with computers? Listen, if you love something, you make the time.

(grin)

Unlike today, I pretty much knew about all of the software that ran on that system. This was before “open source” (and before a lot of things) but since the most common programming language was BASIC, the main way to get software was to type in the program listing from a magazine or book. Thus it was “source available” at least, and that’s how I learned to type as well as being introduced to the “syntax error”. That cassette deck in the picture was the original way to store and retrieve programs, but if you were willing to spend about the same amount as the computer cost you could buy an external floppy drive. The very first program I bought on a floppy was from this little company called Microsoft, and it was their version of the Colossal Cave Adventure. Being Microsoft it came on a specially formatted floppy that tried to prevent access to the code or the ability to copy it.

And that was pretty much the way of the future, with huge fortunes being built on proprietary software. But still, for the most part you were aware of what was running on your particular system. You could trust the software that ran on your system as much as you could trust the company providing it.

Then along comes the Internet, the World Wide Web and browsers. At first, browsers didn’t do much dynamically. They would reach out and return static content, but then people started to want more from their browsing experience and along came Java applets, Flash and JavaScript. Now when you visit a website it can be hard to tell if you are getting tonight’s television listings or unknowingly mining Bitcoin. You are no longer in charge of the software that you run on your computer, and that can make it hard to make judgements about security.

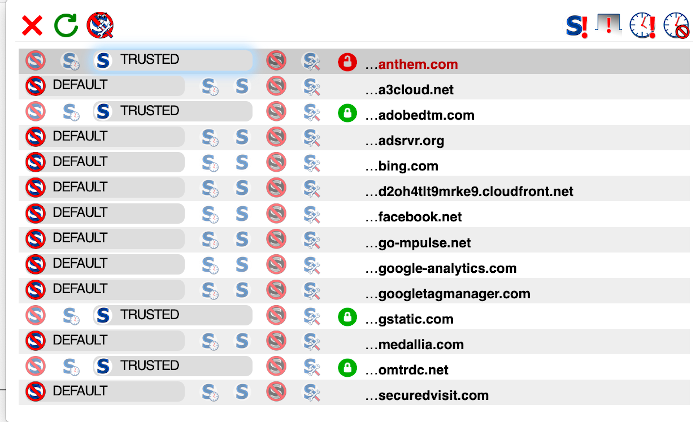

I run a number of browsers on my computer but my default is Firefox. Firefox has a cool plugin called NoScript (and there are probably similar solutions for other browsers). NoScript is an extension that lets the user choose what JavaScript code is executed by the browser when visiting a page. A word of warning: the moment you install NoScript, you will break the Internet until you allow at least some JavaScript to run. It is rare to visit a site without JavaScript, and with NoScript I can audit what gets executed. I especially like this for visiting sensitive sites like banks or my health insurance provider.

Speaking of which, I just filed a grievance with Anthem. We recently switched health insurance companies and I noticed that when I go to the login page they are sending information to companies like Google, Microsoft (bing.com) and Facebook. Why?

I pretty much know the reason. Anthem didn’t build their own website, they probably hired a marketing company to do it, or at least part of it, and that’s just the way things are done now. You send information to those sites in order to get analytics on who is visiting your site, and while I’m fine with it when I’m thinking about buying a car, I am not okay with it coming from my insurance company or my bank. There are certain laws governing such privacy, with more coming every day, and there are consequences for violating them. They are supposed to get back to me in 30 days to let me know what they are sending, and if it is personal information, even if it is just an IP address, it could be a violation.

I bring this up in part to complain but mainly to illustrate how hard it is to be “secure” with modern software. You would think you could trust a well known insurance company to know better, but it looks like you can’t.

Which brings us back to Solarwinds.

Full disclosure: I am heavily involved in the open source network monitoring platform OpenNMS. While we don’t compete head to head with Solarwinds products (our platform is designed for people with at least a moderate amount of skill with using enterprise software while Solarwinds is more “pointy-clicky”) we have had a number of former Solarwinds users switch to our solution so we can be considered competitors in that fashion. I don’t believe we have ever lost a deal to Solarwinds, at least one in which our sales team was involved.

Now, I wouldn’t wish what happened to Solarwinds on my worst enemy, especially since the exploit impacted a large number of US Government sites and that does affect me personally. But I have to point out the irony of a company known for criticizing open source software, specifically on security, letting this happen to their own product. Take this post from one of their forums. While I wasn’t able to find out if the author worked at Solarwinds or not, they compare open source to “eating from a dirty fork”.

Seriously.

But is open source really more secure? Yes, but in order to explain that I have to talk about types of security issues.

Security issues can be divided into “unintentional”, i.e. bugs, and “intentional”, someone actively trying to manipulate the software. While all software but the most simple suffers from bugs, what happened to the Solarwinds supply chain was definitely intentional.

When it comes to unintentional security issues, the main argument against open source is that since the code is available to anyone, a bad actor could exploit a security weakness and no one would know. They don’t have to tell anyone about it. There is some validity to the argument but in my experience security issues in open source code tend to be found by conscientious people who duly report them. Even with OpenNMS we have had our share of issues, and I’d like to talk about two of them.

The first comes from back in 2015, and it involved a Java deserialization bug in the Apache Commons Collections library. The affected library was in use by a large number of applications, but it turns out OpenNMS was used as a reference to demonstrate the exploit. While there was nothing funny about a remote code execution vulnerability, I did find it amusing that they discovered it with OpenNMS running on Windows. Yes, you can get OpenNMS to run on Windows, but it is definitely not easy so I have to admire them for getting it to work.

I really didn’t admire them for releasing the issue without contacting us first. An email sent to “security” at “opennms.org” gets seen by a lot of people and we take security extremely seriously. We immediately issued a workaround (which was to make sure the firewall blocked the port that allowed the exploit) and implemented the upgraded library when it became available. One reason we didn’t see it previously is that most OpenNMS users tend to run it on Linux and it is just a good security practice to block all but needed ports via the firewall.

The second one is more recent. A researcher found a JEXL vulnerability in Newts, which is a time series database project we maintain. They reached out to us first, and not only did we realize that the issue was present in Newts, it was also present in OpenNMS. The development team rapidly released a fix and we did a full disclosure, giving due credit to the reporter.

In my experience that is the more common case within open source. Someone finds the issue, either through experimentation or by examining the code, they communicate it to the maintainers and it gets fixed. The issue is then communicated to the community at large. I believe that is the main reason open source is more secure than closed source.

With respect to proprietary software, it doesn’t appear that having the code hidden really helps. I was unable to find a comprehensive list of zero-day Windows exploits but there seem to be a lot of them. I don’t mean to imply that Windows is exceptionally buggy but it is a huge and widely used piece of software and that complexity lends itself to bugs. Also, I’m not sure if the code is truly hidden. I’m certain that someone, somewhere, outside of Microsoft has a copy of at least some of the code. Since that code isn’t freely available, they probably have it for less than noble reasons, and one cannot expect any security issues they find to be reported in order to be fixed.

There seems to be this misunderstanding that proprietary code must somehow be “better” than open source code. Trust me, in my day I’ve seen some seriously crappy code sold at high prices under the banner of proprietary enterprise software. I knew of one company that wrote up a bunch of fancy bash scripts (not that there is anything wrong with fancy bash scripts) and then distributed them encrypted. The product shipped with a compiled program that would spawn a shell, decrypt the script, execute it and then kill the shell.

Also, at OpenNMS we rely heavily on unit tests. When a feature is developed the person writing the code also creates code to “test” the feature to make sure it works. When we compile OpenNMS the tests are run to make sure the changes being made didn’t break anything that used to work. Currently we have over 8000 of these tests. I was talking to a person about this who worked for a proprietary software company and he said, “oh, we tried that, but it was too hard.”

Finally, I want to get back to that other type of security issue, the “intentional” one. To my understanding, someone was able to get access to the servers that built and distributed Solarwinds products, and they added in malware that let them compromise target networks when customers upgraded their applications. Any way you look at it, it was just sloppy security, but I think the reason it went on for so long undetected is that the whole proprietary process for distributing the software was limited to so few people it was easy to miss. These kinds of attacks happen in open source projects, too, they just get caught much faster.

That is the beauty of being able to see the code. You have the choice to build your own packages if you want, and you can examine code changes to your heart’s content.

We host OpenNMS on GitHub. If you check out the code you could run something like:

```shell
git tag --list
```

to see a list of release tags. As I write this the latest released version of Horizon is 26.0.1. To see what changed from 26.0.0 I can run

```shell
git log --no-merges opennms-26.0.0-1..opennms-26.0.1-1
```

If you want, there is even a script to run a “release report” which will give you all of the Jira issues referenced between the two versions:

```shell
git-release-report opennms-26.0.0-1 opennms-26.0.1-1
```

While that doesn’t guarantee the lack of malicious code, it does put the control back into your hands and the hands of many others. If something did manage to slip in, I’m sure we’d catch it long before it got released to our users.
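If you wanted to make a habit of that kind of review, the steps could be wrapped in a small shell function. This is only a sketch: the name `review_release` is something I made up for illustration and is not part of the OpenNMS repository. It uses nothing but stock git commands and assumes you are sitting in a clone that has both tags.

```shell
# Hypothetical helper (not part of OpenNMS): summarize what changed
# between two release tags using only standard git commands.
review_release() {
    old="$1"
    new="$2"

    # Fail early if either tag is missing from this clone.
    for tag in "$old" "$new"; do
        if ! git rev-parse --verify --quiet "refs/tags/$tag" >/dev/null; then
            echo "unknown tag: $tag" >&2
            return 1
        fi
    done

    # The two-dot range lists commits reachable from $new but not from $old.
    echo "== Commits in $new that are not in $old =="
    git log --no-merges --oneline "$old..$new"

    # Per-file summary of the differences between the two tagged trees.
    echo "== Files touched =="
    git diff --stat "$old" "$new"
}
```

Calling it as `review_release opennms-26.0.0-1 opennms-26.0.1-1` would print the commit subjects followed by a per-file summary, which is a reasonable starting point for deciding which changes deserve a closer look.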

Security is not easy, and as with many hard things the burden gets lighter the more people help out. In general open source software is just naturally better at this than proprietary software.

There are only a few people on this planet who have the knowledge to review every line of code on a modern computer and understand it, and that is with the most basic software installed. You have to trust someone and for my peace of mind nothing beats the open source community and the software they create.