Once again I have to apologize for the light blogging. That will change this week I believe. I have been busy with other writing projects as well as switching to a Linux desktop. It took longer than I thought it would.

As I mentioned earlier, I am working to move away from Apple products. While I wouldn’t classify myself as a rabid fanboy, I do self identify with the fanboy label, so this is a pretty big decision for me.

Before I go any further, I realize that the three people who read this blog are technologists and probably have some of their self-identity, if not self-worth, tied into their technology choices. My move away from Apple is a personal decision based on a number of factors, but I won’t be teasing or disparaging those who decide to stick with using Apple products. In addition, I’m going to discuss some even more controversial choices I made when it comes to using Linux, and again these are choices that work for me and do not mean that those who choose differently “suck”, to use the vernacular.

To recap, with the release of OS X Lion I’ve seen my beloved Apple move to even more strongly lock down the user experience than ever before. I believe this is in preparation to get rid of OS X altogether, and to move even MacBooks to iOS. As I have tied up a lot of information in somewhat proprietary Apple formats, I felt it was time to move, now or never.

I have two initial goals. First, I want to move to a Linux desktop environment, and I want to do it in such a way that I don’t give up anything I had when running OS X. This is important: I am working under the hypothesis that open source desktop solutions can compete with Apple feature for feature. Now I am not expecting to be able to upgrade the firmware on my iPhone 4 using Linux, but I want the same convenience I’ve come to expect from OS X, as well as a pretty and polished interface.

Second, I want to do this with minimal hardware changes. My target system is an early 2009 24-inch iMac with 4GB of RAM and an NVIDIA graphics card.

My original plan was to remain on OS X Snow Leopard and just switch everything to FOSS applications. This didn’t work for a number of reasons. While the UNIX basis for OS X makes it possible to port most of the open source tools I want, they often don’t fit very well and, unlike the native apps, seem to clash with the O/S. Plus, there’s the whole “in for a penny, in for a pound” aspect – if I am serious about changing, I should just do it.

When it comes to operating systems, the most “free” distro out there is Debian. I run Debian on more than half of my servers. Unfortunately, native Debian is a poor choice for a desktop, especially on proprietary hardware like my iMac. While I have no doubt that I could get things to a usable state with Debian, one of my stated goals is ease of use, and from the desktop standpoint Debian ain’t it.

So that left Fedora and Ubuntu. Both are projects controlled by corporate interests (Red Hat and Canonical) and most of my freetard friends prefer Fedora from a “freedom” standpoint. Also, Red Hat is just a few miles down the road from the OpenNMS offices, so I have a soft spot for them. I decided that my first choice would be Fedora 15.

Note: Before y’all start bringing up Mint and Pinguy, remember that I really want ease of use over time, and for this I am leaning toward distros sponsored by larger organizations than those two, even if they are based on Ubuntu.

The next thing I had to decide was which desktop option I should choose: GNOME or KDE. Fedora supports GNOME 3, but the last time I used a Linux desktop (circa 2001/2002) I liked KDE. In reading about GNOME 3 vs. KDE 4, it seems that most people prefer KDE. Remember, I wasn’t invested in either; it is just that I had fond memories of using Konsole back in the day.

Getting Fedora installed was not easy. I believe most of my issues arose from the NVIDIA card, but by using the “basic graphics” option I was able to get the installer to run.

On a side note, I am a huge fan of the Apple Time Machine backup solution. It has saved my hide more than once, and I knew that I could futz around with the disk and partitions all I wanted and still get my system back.

Once it was running, the installer itself was pretty easy. I especially liked the option to encrypt a partition at install (versus trying to figure it out later). I set up four partitions – swap, /boot, root and /home. I figured having a separate /home partition will make things easier in the future with upgrades, etc.

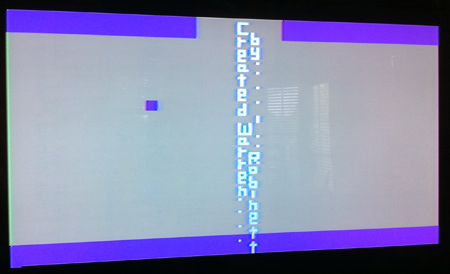

Once I got Fedora 15 installed, I rebooted and saw a sight that would plague me for days: a little blinking “underline” cursor. The system would not even try to boot.

At this point I had kind of violated my “ease of use” goal, so I decided to drop Fedora and download Ubuntu. The problem was that Ubuntu wouldn’t even get to the installer part – it just sat at the blinking underline as well.

Between a lot of searching online and fiddling with grub options (specifically “nomodeset”) I managed to get Fedora to boot. I then uninstalled the nouveau NVIDIA driver and downloaded the proprietary one from the RPMFusion repository.

Here’s where I hit another snag. Fedora has recently upgraded to the 3.0 Linux kernel (although they number it 2.6.40). RPMFusion has yet to upgrade all of its packages to support this kernel, so I had to play around with the kernel modules that build on the fly versus the prebuilt options (akmod vs. kmod).

Another side note: while at this point I was extremely frustrated, I was also having some fun. It has been a while since I had to learn about the internals of my operating system.

Finally, I got KDE to boot. It was different than I remembered, but I picked things up pretty easily. Here’s where I hit two more snags.

I launched Konsole and the first thing that struck me was that it was ugly. The default font looked like something created by a 9-pin Epson printer from 20 years ago. I was so used to having beautifully smooth, anti-aliased fonts that it was a shock to see something so much worse, and it violated one of my rules that I shouldn’t have to give anything up to switch to Linux.

But I figured that would be a problem I could address later. My next task was to get my Apple Magic Mouse to work over Bluetooth.

This was pretty easy in KDE. There was a little Bluetooth configuration widget. It saw my mouse and paired flawlessly. In a minute or so I had a listing for my mouse and a little green dot next to it.

The only problem was that it wouldn’t do any of the sort of things that you expect from a mouse, such as moving the mouse pointer.

More searching seemed to indicate that the kernel upgrade broke Magic Mouse support. By this time my Screw It meter had pegged, and in a fit of pique I wiped the system and restored OS X. Then I went home.

I had spent a couple of days playing with this (with backups and restores taking up most of that time – luckily I had my laptop so I could actually get some real work done) and I was ready to give up.

I couldn’t stop thinking about it, however, and as I tossed and turned trying to sleep that night I decided that I hadn’t given Ubuntu a fair shot. I remembered that I had some issues installing 64-bit Ubuntu on Apple hardware in the past, so maybe I should try 32-bit. It violated my rule about giving things up, but I figured it would only cost me some time and a blank CD to try.

I went into the office on Friday, downloaded the 32-bit version of 11.04 (Naughty Nightnurse) and booted to it.

Same blinking cursor. Grrrr.

When I downloaded the image I noticed one labelled “alternate” on the website. Upon searching I found that this was an alternate installer that might address my graphics card problem. I downloaded the 64-bit version and lo and behold it worked.

The Ubuntu installer is different from the Fedora one, especially in the disk layout section. Whereas Fedora just has a checkbox next to “encrypt this volume”, Ubuntu requires you to first create the partition and then create another encrypted volume that ties to it. Once I got past that part I found that Ubuntu adds the option to just encrypt your home directory on a per-user basis (a la FileVault), which is cool. Since I had already chosen to encrypt the whole /home partition, though, it yelled at me about the fact that the swap partition was unencrypted and that this was a security risk (as passphrases could be cached in swap). So I hit the option to also encrypt swap and moved on.

When I got to the part about installing grub, I couldn’t remember which partition was /boot, so I backtracked through the installer to see where it was. Unfortunately, this caused the newly encrypted swap partition to get corrupted, and nothing I could do through the installer would let me delete it. I couldn’t delete the partition itself without deleting the encrypted volume, and it wouldn’t let me do that due to the corruption.

Arrrrgh.

At this point I put the iMac under my arm and went home. Using an older Fedora boot disk I was able to remove the Linux partitions (I tried booting back to OS X but the Apple Disk Utility has serious issues dealing with ext4 partitions). I then redid the Ubuntu install, choosing just to encrypt my home directory, and it completed without incident.

Reboot … and I’m staring at a blinking cursor once again. Not giving in to despair this time, I added the “nomodeset” option to grub and voilà, I was looking at an Ubuntu Desktop.
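For anyone who wants that option to survive reboots, here is a minimal sketch of the idea in Python. It assumes a GRUB 2 setup where the kernel options live in a `GRUB_CMDLINE_LINUX_DEFAULT="..."` line of `/etc/default/grub`; the `add_kernel_option` helper and the sample text are made up for illustration and are not part of any tool. It only transforms text and never touches the real file.

```python
import re


def add_kernel_option(grub_defaults: str, option: str = "nomodeset") -> str:
    """Return the text of a GRUB 2 defaults file with `option` appended to
    GRUB_CMDLINE_LINUX_DEFAULT. The text is returned unchanged if the option
    is already present or the line does not exist."""
    pattern = re.compile(
        r'^(GRUB_CMDLINE_LINUX_DEFAULT=")([^"]*)(")', re.MULTILINE
    )

    def patch(match):
        options = match.group(2).split()
        if option not in options:
            options.append(option)
        return match.group(1) + " ".join(options) + match.group(3)

    return pattern.sub(patch, grub_defaults)


# Hypothetical sample contents, not copied from a real system.
sample = 'GRUB_DEFAULT=0\nGRUB_CMDLINE_LINUX_DEFAULT="quiet splash"\n'
patched = add_kernel_option(sample)
print(patched)
```

On a real system the edited file would have to be written back as root and the grub configuration regenerated (on Ubuntu, typically with `update-grub`) before the change takes effect.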

It complained about 3D not being enabled and thus this “Unity” interface could not load, but it was extremely easy to install the proprietary NVIDIA driver from the additional hardware installer, which also removed the nouveau driver. Crossing my fingers, I rebooted once more.

Let me say this, Ubuntu is freakin’ gorgeous.

Once I had the proper hardware driver, Unity came up in all of its glory and I really liked it. Remember, I don’t really have a horse in the race of KDE vs. GNOME, and the Unity Launcher reminded me of the OS X dock. The purple and orange theme was easy on the eyes while distancing itself from the muted pastel blues favored by Apple. The fonts were beautifully smooth, and I loved the fact that it was extremely easy to install new software, and the fact that when installing something like Thunderbird it came with a theme that fit in well with the rest of the system.

I still had a weekend’s worth of work before I could even come close to having what I needed to make Ubuntu my working desktop, but after spending four days playing with installing a Linux desktop I was so extremely happy to have something I could live with, and something that I could use as the basis for testing out the rest of my #noapple hypothesis. I am still running OS X on my MacBook Air and my iMac at home, but I think I’ll wait until 11.10 Onanistic Oliphant is released before upgrading those. It’s only a couple of months away and that will give me time to get real comfortable with Ubuntu.

I’ll write more about individual issues and applications later.